Fake voice recordings

8/9/2020

【Line conversations & voice evidence】

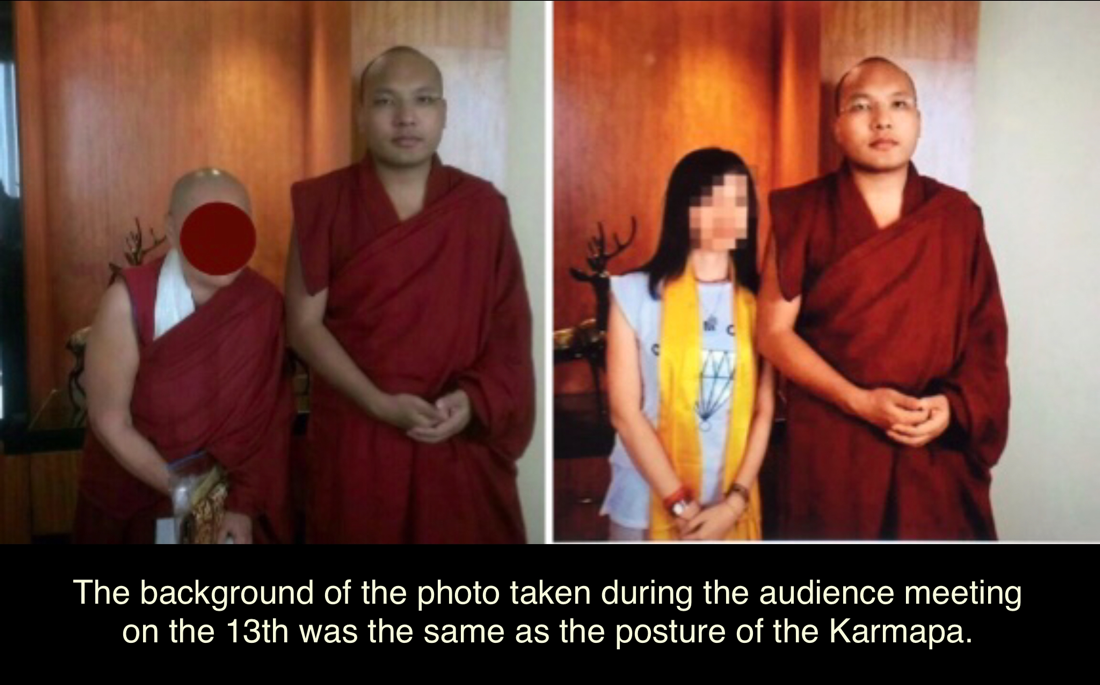

『Wu Hang-Yee revealed the conversation and voice evidence of Karmapa Line and Skype account on Facebook.』 Wu Hang Yee’s so-called Karmapa Line and Skype account has been proved to be a fake Karmapa account set up by Wu. Because the account is fake, Wu’s Line dialogue and voice evidence of the Karmapa are counterfeit as well.

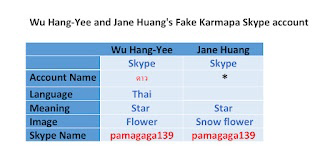

『Taiwan's Jane Huang disclosed to Mirror Weekly a recording of Skype with His Holiness the Karmapa』 Wu and H claimed to have been talking to the Karmapa through Skype for many years. The two set up a Skype account with the Thai name "ดาว" ⭐ and the Skype name "pamagaga139", and wrote the content to defame the Karmapa. Jane Huang's Skype account is fake; how can recordings from that account be authentic?

Vikki Hui Xin Han also claimed that she had an audio call with the Karmapa using the so-called Karmapa Line account. Her so-called Karmapa Line account was Wu Hang Yee's Thai "scum" account. The Line account was fake, so how can the recordings be real?

FUTURISM

2. 28. 18 BY PATRICK CAUGHILL

【China’s Google Equivalent Can Clone Voices After Seconds of Listening

One cloned voice fooled voice recognition tech with 95 percent accuracy.】

AI MIMICRY

The Google of China, Baidu, has just released a white paper showing its latest development in artificial intelligence (AI): a program that can clone voices after analyzing even a seconds-long clip, using a neural network. Not only can the software mimic an input voice, but it can also change it to reflect another gender or even a different accent.

You can listen to some of the generated examples here, hosted on GitHub.

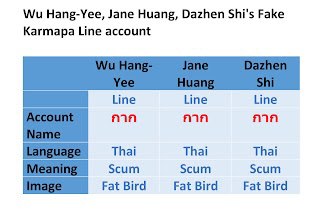

Previous iterations of this technology have allowed voice cloning after systems analyzed longer voice samples. In 2017, the Baidu Deep Voice research team introduced technology that could clone voices with 30 minutes of training material. Adobe has a program called VoCo which could mimic a voice with only 20 minutes of audio. One Canadian startup, called Lyrebird, can clone a voice with only one minute of audio. Baidu’s innovation has further cut that time into mere seconds.

While at first this may seem like an upgrade to tech that became popular in the 90s, with the help of “Home Alone 2” and the “Scream” franchise, there are actually some noble applications for this technology. For example: imagine your child being read to in your voice when you’re far away, or having a duplicate voice created for a person who has lost the ability to talk. This tech could also be used to create personalized digital assistants and more natural-sounding speech translation services.

However, as with many technologies, voice cloning also comes with the risk of being abused. New Scientist reports that the program was able to produce one voice that fooled voice recognition software with greater than 95 percent accuracy in tests. Humans even rated the cloned voice a score of 3.16 out of 4. This could open up the possibility of AI-assisted fraud.

Programs exist that can use AI to replace or alter — and even generate from scratch — the faces of individuals in videos. Right now, this is mostly being used on the internet to bring laughs by inserting Nicolas Cage into the “Lord of the Rings” series. But coupled with tech that can clone voices, we soon could be bombarded with more “fake news” of politicians doing uncharacteristic actions or saying things they wouldn’t.

It’s already very easy to fool swathes of people using just the written word or Photoshop; there could be even more trouble if these technologies were placed into the wrong hands.


【Frequent AI scams in China: “use your voice or your face to say what you have never said”】 - Liberty News 2018-11-29

Fraud techniques keep emerging, and some now use advanced technology to swindle money. Scammers in China use artificial intelligence (AI) to imitate other people's voices and faces, "using your voice and your face to say what you never said," and have successfully defrauded victims of money.

The Chinese WeChat public account "Geek Vision" recently published an article, "The first batch of AI has begun to scam," which says that scam techniques are keeping pace with the times and that some people have fallen for the "WeChat Voice" scam. A woman named Zhao recently received a voice message from her father saying that he had no money to buy vegetables and asking her to transfer 200 yuan. After recognizing her father's accent, Zhao transferred the money, only to find on returning home that she had been deceived. Ms. Wang of Cangzhou was likewise defrauded of RMB 500 by a voice message in which a "classmate" asked to borrow money.

The article pointed out that this kind of scam is mainly caused by the theft of WeChat accounts. Although the current version of WeChat does not allow voice messages to be forwarded, scammers can extract the voice files or install an "enhanced WeChat" to forward them anyway. Even if you call to check, the other party can imitate someone else's voice through AI technology. "Different tone, emotions can be the same, and real and fake cannot be distinguished."

The article mentioned Lyrebird, a voice synthesis technology developed in 2016 at the Montreal Institute for Learning Algorithms (MILA), an artificial intelligence research laboratory at the University of Montreal in Canada. Using artificial neural networks and machine learning, Lyrebird can characterize a person's voice from features such as timbre, pitch, syllables, and pauses, and then produce a voice that closely resembles that person's.

The article pointed out that even if you try to expose the scam by asking for a video call, the other party can also use AI to fake someone else's face. An engineer known online as "deepfakes" recently used the artificial intelligence program "FakeApp" to swap a Hollywood actress's face onto an adult film actress. This technology has even been used to create fake news, which has caused considerable controversy.
